AI gets better with more data. The data that matters most is data you can't move.

Scaleout Edge brings machine learning to where the data lives, training models on distributed devices without raw data ever leaving its source.

The Fundamental Challenge

Why Edge AI Fails with Conventional ML

Standard ML assumes you can centralize data, train in the cloud, and deploy a static model. At the edge (across defense, industrial, and regulated environments) none of those assumptions hold.

The data can't move.

Privacy law, classification boundaries, and bandwidth costs make centralization impractical or illegal. This isn't a policy preference; it's a constraint that disqualifies most ML architectures before they start.

The network can't be trusted.

Contested environments, remote sites, and mobile fleets operate where connectivity is intermittent or denied. Any AI system that requires a live cloud link to function isn't mission-critical; it's a single point of failure.

One model doesn't fit the fleet.

A model trained on centralized, averaged data washes out local patterns. Edge environments are genuinely different operating contexts, not noisy copies of a single distribution. And they shift faster than centralized retraining cycles can follow.

The fleet is a black box.

Thousands of nodes run models independently, with no central record of which version ran where, what data shaped it, or when it was last updated. In regulated or safety-critical systems, that's not an ops problem; it's an accountability void.

Offline by necessity

Distributed fleet

Site-bound operations

Regulatory boundaries

[Diagram labels: Drone, Vehicle, Sensor, Base Services]

How Scaleout Edge Works

The model goes to the data. Not the other way around.

Scaleout Edge decentralizes the training process to where data lives. The architecture is designed around four principles, each a direct response to the constraints of edge deployment.

Answers: One model doesn't fit the fleet

Continuous training on live data

Instead of centralized retraining cycles, edge nodes train directly on local data as conditions change. Any ML code is packaged into structured Compute Packages and distributed to the fleet for continuous, autonomous learning that adapts to each node's environment. Nodes can also fine-tune the shared global model on their own local data, personalising it to their specific context without affecting the shared baseline.
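To make the personalisation idea concrete, here is a minimal sketch in plain Python. It assumes a toy one-feature linear model and ordinary gradient descent; `fine_tune_locally` and its parameters are hypothetical names for illustration, not part of the Scaleout SDK. The point it shows is that a node works on its own copy of the global weights, so the shared baseline is left untouched.

```python
from typing import List, Tuple

def fine_tune_locally(
    global_weights: List[float],
    local_data: List[Tuple[float, float]],
    lr: float = 0.05,
    epochs: int = 500,
) -> List[float]:
    """Return a personalised [slope, bias]; the input list is never modified."""
    w, b = global_weights  # local copies of the shared values
    n = len(local_data)
    for _ in range(epochs):
        # Gradients of mean squared error for the model y = w * x + b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in local_data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in local_data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]

global_model = [1.0, 0.0]                         # shared baseline (hypothetical)
site_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]  # local pattern: y = 2x + 1
personal_model = fine_tune_locally(global_model, site_data)
```

After the call, `personal_model` has moved toward the local pattern while `global_model` still holds the baseline values.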

Answers: The data can't move

Only encrypted updates leave the device

Raw data is architecturally confined to the edge. What travels to the aggregation layer are encrypted model weight adjustments, not sensor feeds or telemetry. Sovereignty is enforced by system design, not access control policy. Federated learning is formally recognised as a Privacy-Enhancing Technology (PET), consistent with GDPR, HIPAA, and data residency requirements by design.
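As a rough illustration of what "only updates leave the device" means, the sketch below builds an update payload that contains weight adjustments and a sample count, and nothing derived row-by-row from the local data. The function name `build_update` and the payload fields are assumptions made for this example. The encryption step is deliberately left out because the scheme used by the platform is not described here.

```python
import json
from typing import Dict, List

def build_update(global_w: List[float], local_w: List[float], num_samples: int) -> Dict:
    """Payload holds weight deltas and a sample count, never training rows."""
    delta = [lw - gw for gw, lw in zip(global_w, local_w)]
    return {"delta": delta, "num_samples": num_samples}

raw_sensor_rows = [[0.1, 0.9], [0.4, 0.2], [0.7, 0.5]]  # stays on the device

update = build_update(
    global_w=[0.5, -1.0],
    local_w=[0.75, -1.25],  # weights after local training (illustrative values)
    num_samples=len(raw_sensor_rows),
)

# Serialised form of the update; a real deployment would encrypt these bytes
# before they leave the node.
wire_bytes = json.dumps(update).encode("utf-8")
```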

Answers: The network can't be trusted

Designed for denied connectivity

The platform never assumes a stable connection. Nodes train independently and sync updates when connectivity allows. A drone that loses signal mid-mission continues learning; when it reconnects, its updates merge automatically. Combiners can also be deployed at the edge itself for hierarchical topologies where no central aggregation point is reachable.
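One way to picture the sync-when-possible behaviour is a local queue that holds updates while the link is down and drains them in order once it returns. The sketch below is an assumption about how such a buffer could look; `UpdateBuffer` and the `send` callback are hypothetical and do not reflect the platform's actual client.

```python
from collections import deque
from typing import Callable, Deque, Dict, List

class UpdateBuffer:
    """Holds model updates produced while offline and flushes them in order."""

    def __init__(self) -> None:
        self._pending: Deque[Dict] = deque()

    def add(self, update: Dict) -> None:
        self._pending.append(update)

    def flush(self, send: Callable[[Dict], bool]) -> int:
        """Send queued updates oldest-first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # link dropped again; keep the rest queued
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)

buffer = UpdateBuffer()
for round_id in range(3):  # three training rounds with no signal
    buffer.add({"round": round_id})

received: List[Dict] = []

def link_up(update: Dict) -> bool:
    received.append(update)
    return True

sent_offline = buffer.flush(lambda update: False)  # still disconnected
sent_online = buffer.flush(link_up)                # reconnected
```

Nothing is lost while disconnected: the first flush sends nothing, and the second delivers all three rounds in their original order.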

Answers: The fleet is a black box

Immutable audit trail across the fleet

The control layer captures training metrics, model provenance, and node health across every device. Every model version is recorded with its compute package hash and session lineage. Together, these records form the accountability chain required for regulated and safety-critical deployments.
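A common way to make such a record tamper-evident is to hash each entry together with the hash of the entry before it. The sketch below illustrates that general technique with Python's standard `hashlib`; the record fields and function names are assumptions for the example, and it is not a description of how the control layer stores its audit trail.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def append_record(log: List[Dict], model_version: str,
                  package_bytes: bytes, session_id: str) -> Dict:
    """Append a record whose hash covers its content and the previous hash."""
    body = {
        "model_version": model_version,
        "package_hash": sha256_hex(package_bytes),
        "session_id": session_id,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS,
    }
    digest = sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))
    record = dict(body, record_hash=digest)
    log.append(record)
    return record

def verify(log: List[Dict]) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if body["prev_hash"] != prev:
            return False
        digest = sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))
        if digest != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True

audit_log: List[Dict] = []
append_record(audit_log, "v1", b"compute-package-a", "session-001")
append_record(audit_log, "v2", b"compute-package-a", "session-002")
intact = verify(audit_log)

audit_log[0]["model_version"] = "v9"  # simulate tampering with history
tampered_detected = not verify(audit_log)
```

A chain like this detects changes rather than preventing them, so it is usually paired with write-once storage or external anchoring of the latest hash.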

1. Package. ML code is wrapped into a Compute Package and distributed to edge nodes.

2. Train locally. Each node trains on its private data. Raw data never leaves the device.

3. Aggregate. Encrypted weight updates flow to Combiners. The Reducer in the Control Layer merges combiner outputs into the next global model, committed to the registry.

4. Propagate. The improved model is pushed back to the fleet. The cycle repeats continuously.
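The aggregate step can be sketched with sample-weighted federated averaging applied at two levels, first per combiner and then at the reducer. This is an illustration under the assumption that a FedAvg-style weighted mean is used; the function and variable names are hypothetical, and encryption is omitted. It also shows that averaging in two stages gives the same result as averaging every node update at once, provided sample counts are carried along.

```python
from typing import List, Tuple

Update = Tuple[List[float], int]  # (weights, number of training samples)

def weighted_average(updates: List[Update]) -> Update:
    """Sample-weighted mean of weight vectors, plus the total sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    merged = [sum(w[i] * n for w, n in updates) / total for i in range(dim)]
    return merged, total

# Two combiners, each aggregating its own group of nodes.
combiner_a = weighted_average([([1.0, 2.0], 10), ([3.0, 4.0], 30)])
combiner_b = weighted_average([([5.0, 6.0], 60)])

# The reducer merges combiner outputs into the next global model.
global_model, total_samples = weighted_average([combiner_a, combiner_b])

# Averaging all node updates in a single step, for comparison.
flat_model, _ = weighted_average(
    [([1.0, 2.0], 10), ([3.0, 4.0], 30), ([5.0, 6.0], 60)]
)
```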

Integration

Not a replacement. An extension.

Scaleout Edge sits between your existing ML development environment and your edge fleet. Your frameworks, cloud platforms, and MLOps tooling stay in place. We handle the distributed execution layer.

Your ML code stays in the framework you already use. Scaleout wraps it into a Compute Package for edge distribution; no rewrite is required.

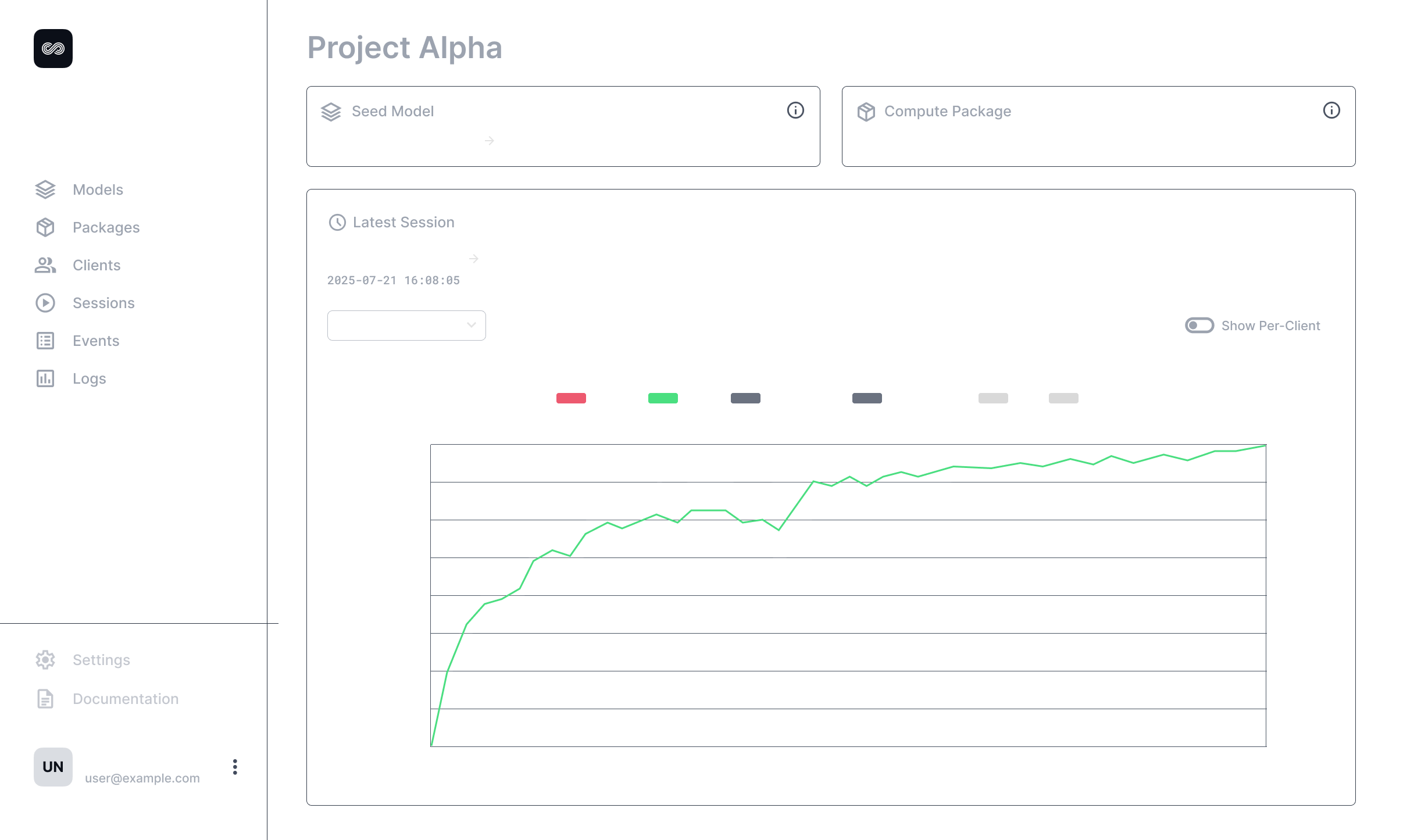

Orchestrate models & fleets

- REST API & Web UI: Manage sessions, models, and fleet configuration. No custom client required.

- Model Registry: Version, stage, and distribute models. Supports import/export for interop with MLflow and existing pipelines.

- Observability Backend: Fleet-wide dashboards for training metrics, node health, and model lineage. Metrics export to Grafana, Prometheus, and Datadog.

Server-side components run on any standard cloud or on-prem infrastructure. No proprietary runtime required. Can be deployed entirely within a controlled perimeter (on-premises, national cloud, or air-gapped) with no dependency on external SaaS services.

Run on constrained hardware

Lightweight SDKs in three languages, with minimal dependencies.

- Python SDK: Full training and inference. For devices with standard compute: Jetson, x86 edge servers, on-prem nodes.

- C++ SDK: For embedded systems and resource-constrained hardware where Python isn't viable.

- Kotlin SDK: For Android-based edge devices and mobile platforms.

Built-in local telemetry captures CPU/GPU load, memory, battery, and training metrics with no additional instrumentation.

Edge AI Modules

Don't start from scratch.

Pre-built, federated-ready modules for the most common edge AI domains. Each module includes model architectures, training configurations, and reference workflows. Designed to compress months of integration into days.

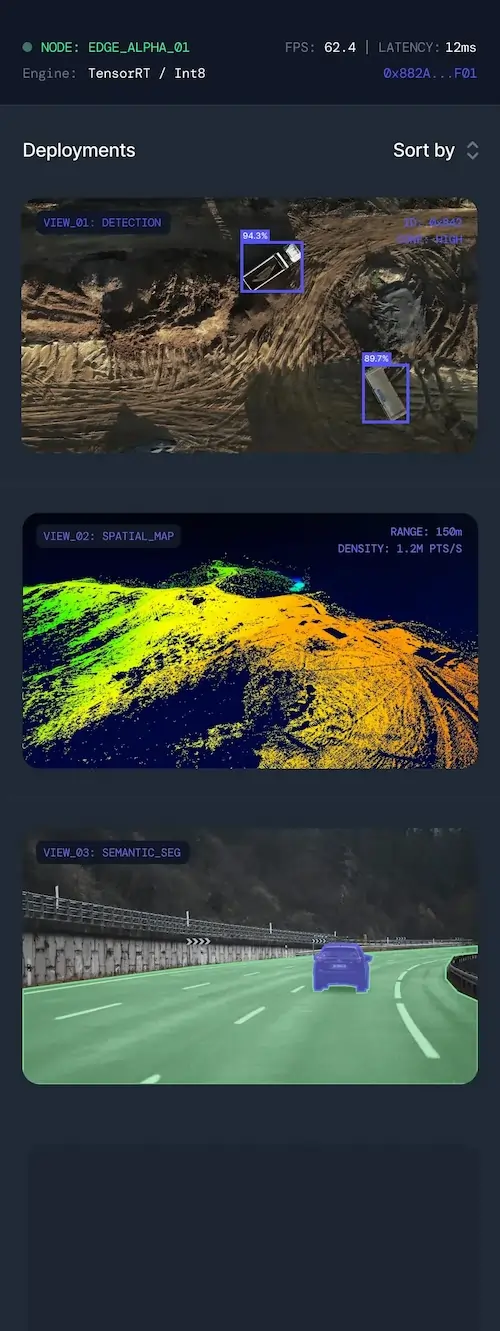

Perception (Computer Vision)

Federated-ready model architectures for detection, classification, and segmentation. Supports continuous improvement of vision models across distributed fleets via federated fine-tuning. Each node learns from its local environment without sharing raw imagery.

Tactical Edge

Modular toolkit for unmanned vehicles featuring on-drone inference with offline caching and sync, autonomous flight ops for recon and engagement, and swarm coordination for shared state and tasking with ground station integration.

Adversarial Modeling

Test whether your federated models leak private data. Privacy auditing covers Model Inversion (MInvA), Membership Inference (MIA), Gradient Inversion, and Synthetic Data Attacks. Adversarial simulations include Data Poisoning, Backdoor, and Label Flipping.
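To give a feel for what a membership inference audit measures, the sketch below implements the simplest loss-threshold variant: guess that a sample was in the training set when the model's loss on it is low. The loss values are made-up illustrative numbers and the function name is hypothetical; this is not the module's own implementation.

```python
from typing import List

def membership_attack_accuracy(
    member_losses: List[float],
    non_member_losses: List[float],
    threshold: float,
) -> float:
    """Guess 'member' when loss < threshold; return fraction of correct guesses."""
    correct = sum(1 for loss in member_losses if loss < threshold)
    correct += sum(1 for loss in non_member_losses if loss >= threshold)
    return correct / (len(member_losses) + len(non_member_losses))

# Illustrative per-sample losses, not measurements from a real model.
train_losses = [0.05, 0.10, 0.08, 0.40]    # samples the model was trained on
holdout_losses = [0.90, 0.35, 0.70, 0.60]  # samples it never saw

accuracy = membership_attack_accuracy(train_losses, holdout_losses, threshold=0.5)
```

With equally sized groups, an accuracy close to 0.5 means the attack does no better than guessing, while values well above 0.5 suggest the model has memorised its training samples.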

ASR (Speech & Language)

Federated fine-tuning of speech and language models on local private data. Reference workflows for Whisper, wav2vec, and transformer-based LLMs, with edge-optimized deployment targeting hardware like NVIDIA Jetson.

    # Configure ASR for secure edge deployment
    import scaleout_edge as soe
    from transformers import WhisperForConditionalGeneration

    # Load base model for fine-tuning
    model = WhisperForConditionalGeneration.from_pretrained("openai/whisper-large-v3")

    # Initialize Federated Fine-tuning
    trainer = soe.FederatedTrainer(
        model=model,
        dataset="/data/local_voice_records",
        strategy="fed_avg"
    )

    # Compile for hardware target
    optimized_model = soe.compile(
        target="nvidia_jetson_orin",
        precision="int8",
        enable_tensor_rt=True
    )

    optimized_model.deploy(port=8080)

Use Cases

One platform. Four operational contexts.

From contested defense environments to regulated industries, a consistent pattern: distributed data that cannot move and AI that must keep improving.

Autonomous Intelligence in Denied Environments

Drones, unmanned systems, and forward-deployed sensors operating in contested or bandwidth-denied environments where cloud connectivity is a tactical liability.

- On-device training directly on sensor streams. Intelligence generated at the source, not relayed to a data center.

- Model updates aggregate locally across the swarm; sensitive raw data never crosses classification boundaries.

- Node loss or signal disruption does not halt the training cycle.

One Organization. Many Sites. Data That Can't Leave Any of Them.

Oil & gas platforms, mines, and energy grids where each facility's operational data is contractually or legally bound to its physical location, but the model still needs to improve across the entire network.

- Predictive maintenance and anomaly detection trained on live sensor streams at each site. No operational data crosses facility boundaries.

- Model improvements federate across a global network of sites when connectivity allows, whether a North Sea platform or a remote mine.

- Per-site data residency requirements met architecturally, not through access controls that can be misconfigured or overridden.

Fleet-Wide Learning Without Bottlenecks

Thousands of vehicles generating terabytes of telemetry daily. Too much to centralize, too valuable to ignore.

- Safety and intrusion detection models improve from each vehicle's own data stream, building collective fleet intelligence without exposing individual routes or operator behavior.

- Only compressed weight updates are transmitted: orders of magnitude less data than raw telemetry.
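The compression scheme is not specified here, so the following is only a sketch of one widely used option, top-k sparsification, in which a node sends just the largest-magnitude entries of its weight update along with their positions. The function names are hypothetical.

```python
from typing import Dict, List

def top_k_sparsify(delta: List[float], k: int) -> Dict[int, float]:
    """Keep the k largest-magnitude entries as {index: value}."""
    ranked = sorted(range(len(delta)), key=lambda i: abs(delta[i]), reverse=True)
    return {i: delta[i] for i in sorted(ranked[:k])}

def densify(sparse: Dict[int, float], size: int) -> List[float]:
    """Rebuild a full-length vector, filling dropped entries with zero."""
    return [sparse.get(i, 0.0) for i in range(size)]

delta = [0.01, -0.80, 0.02, 0.50, -0.03, 0.00]  # illustrative weight update
sparse = top_k_sparsify(delta, k=2)             # what would be transmitted
restored = densify(sparse, len(delta))          # what the receiver rebuilds
```

The trade-off is lossy: small entries are dropped, so implementations often accumulate the discarded residual locally and add it to the next round's update.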

Federated Intelligence Without Data Exchange

High-value datasets in government, healthcare, and finance siloed by privacy law and classification boundaries. Data cannot move, but insight must.

- Collectively train better models across institutions without any party seeing another's raw data or proprietary information.

- GDPR and HIPAA compliance enforced architecturally. Data physically cannot leave its jurisdiction.

Your data. Your infrastructure. Your models.

Scaleout Edge is deployed as a single-tenant instance on your infrastructure, under your control. We work with your team to scope the deployment, from initial pilot to production fleet.

How Pricing Works. Pricing adjusts based on deployment scope (active environments and aggregation points), node capacity (concurrent edge devices), and support tier.